Artificial intelligence is one of the most talked-about technologies of our time.

Depending on who you listen to, it will either:

- Transform society for the better

- Or disrupt jobs, privacy, and truth itself

Most discussions focus on specific issues—job loss, misinformation, bias, loss of control. It’s natural to assume that people weigh these concerns one by one and arrive at an overall opinion.

That’s what I expected.

That’s not what I found.

A Different Pattern Emerges

I conducted a brief public poll using a convenience internet sample of 47 respondents (median age 35–44; 51% with at least a four-year college degree). I asked people about:

- Whether AI will harm or help society

- Their personal use of AI

- Trust in AI across different domains

- Concerns about specific risks

- Their expectations for the future

What emerged was not a collection of separate opinions.

It was something much more coherent.

As people move from believing AI will harm society to believing it will help, several things increase consistently:

- Frequency of AI use

- Expectation that AI will improve their personal lives

- Trust in AI across domains

- General optimism about the future

At the same time, concern about risks decreases.

This pattern does not look like noise: the same direction of change shows up across multiple variables.
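For readers who want to check for this kind of pattern in their own survey data, here is a minimal sketch using a Spearman rank correlation, which suits ordered answer scales. It is not the analysis behind this poll. The function names, the 1-to-5 coding, and the response values are all made-up placeholders.

```python
from math import sqrt


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        average_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = average_rank
        pos = end + 1
    return result


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(ranks(x), ranks(y))


# Made-up placeholder responses, NOT the poll data.
harm_help = [1, 2, 2, 3, 4, 4, 5]       # assumed coding: 1 = harm, 5 = help
use_frequency = [1, 1, 2, 3, 3, 4, 5]   # assumed coding: 1 = never, 5 = daily
risk_concern = [5, 5, 4, 3, 3, 2, 1]    # assumed coding: 1 = low, 5 = high

rho_use = spearman(harm_help, use_frequency)
rho_concern = spearman(harm_help, risk_concern)
print(f"harm/help vs. use frequency: {rho_use:+.2f}")
print(f"harm/help vs. risk concern:  {rho_concern:+.2f}")
```

A positive value means the two answers rise together, and a negative value means one falls as the other rises. The pattern described above would show up as positive values for use, trust, and optimism, and a negative value for concern.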

AI Attitudes Are Not Piece-by-Piece

What this suggests is something important:

People are not forming opinions about AI issue by issue.

Instead, they appear to adopt a general stance, either more optimistic or more concerned, and that stance appears to shape everything else. This kind of pattern is well known in psychology, where it resembles the halo effect and the affect heuristic: once people form a general attitude, their beliefs about specific issues tend to align with it.

Once someone leans in one direction:

- They tend to trust AI more broadly

- Use it more often

- See more benefit

- And perceive less risk

Or the reverse.

Fear Moves Together

The pattern becomes clear when the results are viewed across the full spectrum of responses:

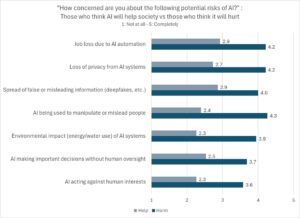

This chart shows how concern about specific risks changes across the same spectrum.

As belief in AI shifts toward “help,” concern decreases across all of the following:

- Misinformation and manipulation

- Loss of privacy

- Job loss due to automation

- AI making decisions without human oversight

- Environmental impact

What’s striking is not just the decrease but the consistency. These are all separate issues. There is no obvious reason why concern over AI’s environmental impact should be associated with concern over privacy. But it is.

All of these concerns move together.
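One common way to put a number on "moving together" is Cronbach's alpha, which compares the variance of each item with the variance of the summed score. The sketch below is an illustration only, not the analysis behind the chart. The item names, the 1-to-5 coding, and the scores are made-up placeholders.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item columns.

    Each column holds one item's scores, with respondents in the same
    order in every column.
    """
    k = len(items)
    # Total score per respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(column) for column in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Made-up placeholder scores (assumed coding: 1 = not concerned,
# 5 = very concerned), NOT the poll data.
concerns = {
    "misinformation": [5, 4, 4, 3, 2, 1],
    "privacy":        [5, 5, 4, 3, 2, 2],
    "job_loss":       [4, 4, 3, 3, 2, 1],
    "no_oversight":   [5, 4, 4, 2, 2, 1],
    "environment":    [4, 5, 3, 3, 1, 1],
}

alpha = cronbach_alpha(list(concerns.values()))
print(f"Cronbach's alpha across {len(concerns)} concerns: {alpha:.2f}")
```

Values close to 1 indicate that the items rise and fall together closely enough to behave like a single scale, which is what a generalized level of concern would look like. Values near 0 would indicate that the concerns vary independently.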

Fear of AI Is Generalized

This suggests another key insight:

People are not distinguishing sharply between different types of AI risk.

Instead, they appear to have a general level of concern that gets applied across multiple issues.

Someone who is worried about AI tends to be worried about many aspects of it, not just one.

Someone who is comfortable with AI tends to be comfortable across the board.

A Surprising Non-Finding: Job Loss

One of the most commonly discussed concerns about AI is job displacement.

It’s often assumed that fear of losing one’s job would strongly influence attitudes toward AI.

But in this data:

Concern about one’s own job being replaced was not strongly related to whether someone viewed AI as helpful or harmful.

This is a striking result.

It suggests that people are not forming their overall views based primarily on economic self-interest.

Instead, broader psychological factors, such as optimism, trust, and comfort with change, may be playing a larger role.

Another Surprise: Who You Are Doesn’t Predict What You Think

There’s another assumption that didn’t hold up.

We often expect differences based on demographics. Younger and better-educated adults are often expected to be more accepting of new technology.

But in this dataset:

Age and education were not meaningfully related to whether people believed AI would harm or help society.

This reinforces the idea that AI attitudes are not primarily demographic.

They are psychological.
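A note on how much a sample of 47 can say about "not meaningfully related." The sketch below uses the Fisher z-transformation to approximate a 95% confidence interval around a correlation. It is a rough guide under stated assumptions: the method is designed for Pearson correlations on roughly normal data, so it is only approximate for survey scales, and the function name is a placeholder.

```python
from math import atanh, sqrt, tanh


def correlation_ci(r, n, z_crit=1.96):
    """Approximate confidence interval for a correlation r from n cases.

    Uses the Fisher z-transformation; z_crit=1.96 gives a 95% interval.
    """
    z = atanh(r)                    # transform r to the z scale
    standard_error = 1 / sqrt(n - 3)
    low = tanh(z - z_crit * standard_error)
    high = tanh(z + z_crit * standard_error)
    return low, high


# An observed correlation of exactly zero, with the poll's sample size.
low, high = correlation_ci(0.0, 47)
print(f"95% interval for r = 0 with n = 47: {low:.2f} to {high:.2f}")
```

With 47 respondents, an observed correlation of zero is compatible with a true correlation anywhere from roughly -0.29 to 0.29. A small or moderate link with age or education could therefore exist without showing up here, which is one reason the larger-sample caveat matters.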

A Psychological Interpretation

Taken together, these findings point toward a simple but powerful idea:

People relate to AI through a general orientation toward the future.

Some people:

- Feel optimistic

- Believe they can adapt

- Are open to new technologies

Others:

- Feel more cautious

- Focus on potential downsides

- Are less trusting of emerging systems

AI becomes a focal point for these broader tendencies.

What This Means

If these patterns hold in larger samples, they have important implications:

- Public opinion about AI may be less about specific policies or risks than we think

- Efforts to build trust may need to address general confidence and perceived control, not just technical safeguards

- Discussions about AI could benefit from recognizing that people are not always evaluating it analytically; they are often responding to it holistically

Final Thought

We often talk about AI as if people are carefully weighing its pros and cons.

But this data suggests something different:

People don’t just evaluate AI.

They relate to it.

And that relationship shapes everything else.

What Do You Think?

Do you see your own views about AI as:

- A set of specific concerns and trade-offs?

- Or more of a general feeling about where technology, and the future, is heading?